This is extremely useful for double-checking any elements Frog reports on. The Frog will save the HTML for every page. Select both options here, as Google has some specific requirements that aren’t included in the schema org guidelines.īoth the options in this section are unticked by default, I always tick them: Validates the mark-up against Google’s own documentation. Tick "Google Rich Results Feature Validation." I tick all of the above options, so I can fully audit the schema of the site, no matter how it’s implemented.Ī great feature to check all schema validates against the official suggested implementation. Lots of interesting things can be in the headers.Į.g If a site uses dynamic content serving for desktop vs mobile, it should use the Vary HTTP Header.Įxtraction Tab Settings Tab - Structured DataĪll the elements in this section are unticked by default, I tick them all: I tick one option here over the default settings: Submit any you know about that aren't listed in the robots.txtĮxtraction Tab Settings Tab - Page Detailsīy default, all these elements are ticked and that’s how I recommend you keep them for most audits.Įxtraction Tab Settings Tab - URL Details Tick "Auto Discover XML Sitemaps via robots.txt"Īs many sites include a link to their XML sitemaps in robots, it’s a no brainer to click this, so you don’t have to manually add the Sitemap URL. Discover any URLs that are linked in XML Sitemaps but aren't linked on the site (orphan pages) No 404s, redirects, noindexed, canonicalised URLs.ī. Check if all important pages are included in sitemapsī. Otherwise I might miss external URLs which are 404s, miss discovering pages that are participating in link spam or have been hacked.īy default all 3 options in this section are unticked, I tick them all:Ī. I want the Frog to crawl all possible URLs.ī. I want to know if a site is using ”nofollow” so I can investigate & understand why they are using it on internal links.Ī. 
I want to discover as many URLs as possibleī. I always have this ticked, because if I’m doing a complete audit of a site, I also want to know about any subdomains there are.Ī. Leaving this unticked won’t crawl any subdomains the Frog my encounter linked. The Frog checks for lots of AMP issues!īy default, there are 4 boxes unticked here that I tick (Green ticks): A site could also be using AMP, but you might not realise it.ī. We want to discover all URLs and be able to audit multilingual/local setups, so tick this.Ī.

The alternate version of URLs may not be linked in the HTML body of the page, only in the href lang tags. We don't want to miss any pages, so tick this. Such as PDPs on ecommerce categories, or articles on a publishers site. There could be URLs only linked from deep paginated pages. You can crawl much bigger sites than in RAM mode.Īllocate as much RAM as you can, but always leave 2GB from your total RAM.īy default, there are 4 boxes unticked here that I tick

If the Frog or your machine crashes the crawl is autosaved.ī. If you have an SSD, use Database mode because:Ī.

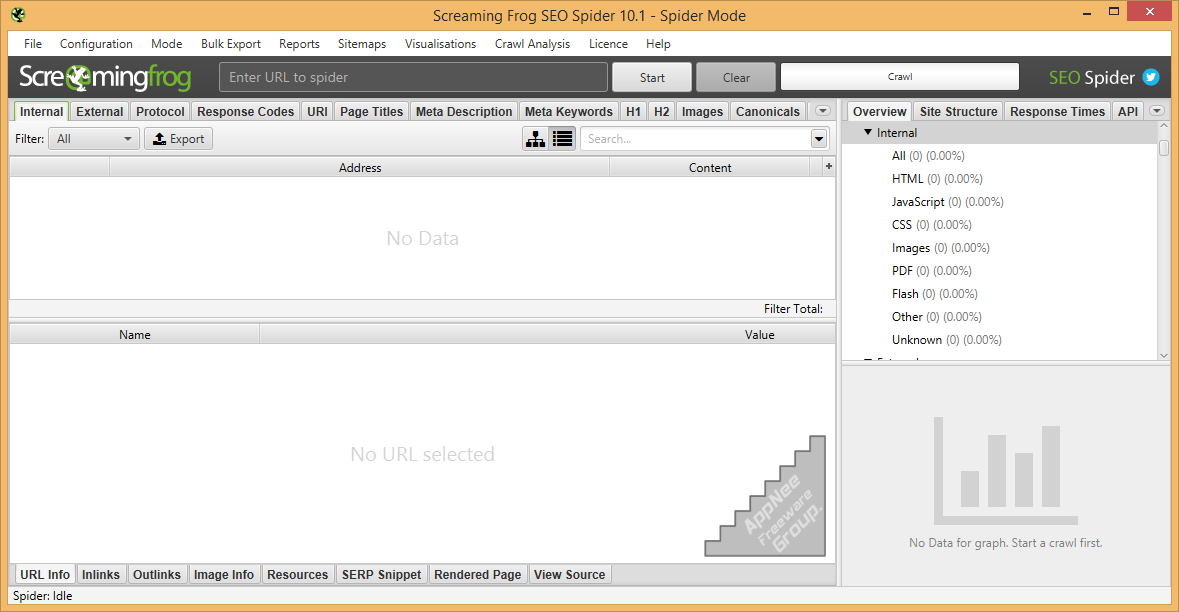

Here's the best bits from it: (There's lots more in the full guide, so please check that) However, having trained people on using it since 2010, I know that new users struggle to understand what the best settings are for doing audits, often missing issues due to the default settings. Screaming Frog, probably the most used SEO tool in the industry. Message the mods and we'll get back to you soon. Look at your username up above in the sidebar and click the little (edit) link to assign yourself some flair. Please do not put URLs or other spammy material into your user flair. Edit the text to include your job title (or whatever identifiable information you want). The color represents your type of employment. You can contribute to the wiki by having over 100 karma in this subreddit! User Flair Contribute to the Wiki! 100 Karma or Ask! SEO for Grown UpsĬheck out past /r/BigSEO AMAs! Other subreddits we likeĬheck out this subreddit's wiki for lots of information about SEO. Read our guide for new users more details. New posters and posters that fall below that threshold will need to have their posts manually approved. To remove spam, this subreddit requires that contributors' accounts are over 1 day old and have at least 3 comment karma. Our definition of spam is "self-marketing orientated content" and "low grade content that offers no value for the average professional". Please click report to report abuse, spam or anything that you'd like to be flagged for deletion. 14 April - LINKBUILDING: Amanda Milligan, Head of Marketing at Stacker Studioįollow on Twitter to get a feed of the best threads from /r/bigseo there, including some older threads you may have missed.A community for professional Agency, In-House, and self-employed SEOs looking to discuss strategy, share ideas, case-studies, and learn.
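To make the XML sitemap points above a bit more concrete, here is a rough Python sketch of the same two ideas: reading `Sitemap:` lines out of a robots.txt file, and spotting orphan pages as a set difference. This is not how the Frog does it internally. The function names and the example.com URLs are made up for illustration, and it assumes you already have the list of sitemap URLs and the list of internally linked URLs (for example, from crawl exports).

```python
from typing import Iterable


def sitemaps_from_robots(robots_txt: str) -> list[str]:
    """Return the URLs declared on 'Sitemap:' lines of a robots.txt body."""
    urls = []
    for line in robots_txt.splitlines():
        # Drop trailing comments, then split "field: value" only once,
        # so the colon inside "https://" stays part of the value.
        field, sep, value = line.split("#", 1)[0].partition(":")
        if sep and field.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


def orphan_urls(sitemap_urls: Iterable[str], linked_urls: Iterable[str]) -> set[str]:
    """URLs listed in sitemaps that no crawled page links to."""
    return set(sitemap_urls) - set(linked_urls)


# Hypothetical data, for illustration only.
robots = "User-agent: *\nDisallow: /cart\nSitemap: https://example.com/sitemap.xml\n"
print(sitemaps_from_robots(robots))

in_sitemap = ["https://example.com/", "https://example.com/old-landing-page"]
linked_on_site = ["https://example.com/", "https://example.com/about"]
print(orphan_urls(in_sitemap, linked_on_site))
```

The second `print` shows the URL that is in the sitemap but never linked internally, which is the kind of page you would then want to review by hand.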

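On the HTTP headers point: if you store response headers, a check for the Vary header is easy to script against them. The sketch below assumes dynamic serving is signalled with `Vary: User-Agent`, which is the usual convention. The function name and the sample headers are hypothetical, not something taken from the Frog.

```python
def varies_on_user_agent(headers: dict[str, str]) -> bool:
    """True if the response's Vary header lists User-Agent."""
    for name, value in headers.items():
        # Header names are case-insensitive, so compare in lower case.
        if name.lower() == "vary":
            tokens = {token.strip().lower() for token in value.split(",")}
            return "user-agent" in tokens
    return False


# Hypothetical response headers, for illustration only.
print(varies_on_user_agent({"Content-Type": "text/html", "Vary": "Accept-Encoding, User-Agent"}))
print(varies_on_user_agent({"Content-Type": "text/html", "Vary": "Accept-Encoding"}))
```

A page that serves different HTML to mobile and desktop user agents but fails this check is worth flagging in the audit.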